On the heels of my dieselpunk appearance in Rhonda Parrish’s most recent alliterative series, I have sold a steampunk story to her next installment: my story “Checkmate” will be featured in Clockwork, Curses, and Coal. Parrish is not only a gifted anthologist but is also becoming one of my favorite people to work with, and I encourage readers to check out her staggeringly creative array of books.

And speaking of clocks (or, more specifically, watches), I know I’m a little late to the party, but I finally got around to seeing HBO’s Watchmen series and absolutely loved it. From a creative perspective, it aligned with the style of storytelling I like to use: lots of seemingly unrelated shards that steadily assemble into one cohesive narrative. It also managed a difficult feat: taking the well-known and well-loved universe of Alan Moore and Dave Gibbons’ Watchmen and, instead of rebooting or “reimagining” it, crafting a compelling continuation.

In the tradition of the original, the new Watchmen continues to expand that world and its alternate timeline, while using the opportunity to once again examine social and cultural issues. My jaw dropped when I saw that the very first episode opened with a terrifying and all-too-relevant look at the 1921 Tulsa race massacre. (The visceral depiction was not nearly as troubling as the droves of people who later said, “Did that really happen? How have I never heard of this before?”)

From that launching point, we are pulled into a thought-provoking and refreshing examination of the masked crimefighter trope and some parallaxes of perspective that had me hooked from beginning to end. Top-flight performances from Regina King, Louis Gossett Jr., Jean Smart, and a delightfully odd Tim Blake Nelson propel this fascinating alternate history. The writing is first-rate, the worldbuilding is marvelous and cleverly handled, and it’s all served up with an appropriately otherworldly soundtrack from the Reznor-Ross duo. Kudos to the entire creative team. Highly recommended.

#

I’ve written elsewhere that I think social media is the worst development since smallpox: a javelin-thrust into the heart of rationality and civil discourse. Since there’s obviously no putting that genie back into its toxic little bottle, we need to at least exercise better social media hygiene, because our lives and our Republic hinge upon it.

The staggering disinformation campaign of 2016 was not the start of this, but what’s troubling is that few people seem aware that it never ended. Some of the articles mentioned in this very important article were shared by people I know. These articles were created and distributed by troll-farms with the intent of attacking scientific and medical credibility. There’s a literal body count attached to this, beyond exacerbating our lunatic tribalism. There are many things in life we can’t control, but this is something we have power over.

These disinformation brokers are largely behind the “mask debate.” They fuel vaccine conspiracy theories, including eager promotion of a certain Video That Shall Not Be Named. Disinformation brokers understand that our habit of insta-sharing articles, coupled with our cultural conceit that “all opinions are equal,” is easy to hijack. Theirs is a calculated, organized campaign to undermine society, public health, the political and cultural landscape, and human lives, and they’ve taken it beyond Orwell. There’s an entire industry looking to use us as carriers for their disease… literal and figurative.

A chilling article from Rolling Stone last year warned: “Professional disinformation isn’t spread by the account you disagree with–quite the opposite. Effective disinformation is embedded in an account you agree with.” They try to grow their audience, pretending to be one of the tribe, and then turn the spigot on lies and divisiveness. “Masks are stealing your freedom! Masks are spreading the virus! COVID was planned!” Professional trolls are the Grima Wormtongue of the Internet. It’s up to us not to be Theoden.

I encourage my fellow humans to rally together to change this. Stop sharing articles you haven’t read and vetted yourself. Consider the sources. Read the date (something posted five years ago isn’t really “news”). “Doing research” needs to be more than a euphemism for ping-ponging around bubbles of confirmation bias.

We already have one virus tearing up the world. Disinformation spreads faster and is more dangerous, yet it is something we have the power to stop.

#

Pac-Man just turned 40 years old, and Smithsonian Magazine has a nice article about the history and impact of everyone’s favorite maze-running 1980s-era Eater of Souls.

The first video game I played was an Asteroids arcade cabinet in a local Carvel ice cream parlor. This was around the time I wanted to be an astronaut, thanks to a ride on an Astroliner carnival attraction, an experience that set me on the path to becoming a sci-fi writer.

Shortly after this digital baptism, my parents bought me my first console: a wood-paneled Atari 2600. It’s no surprise that a great deal of that early catalogue was 8-bit sci-fi: Berzerk, Missile Command, Galaxian, Atlantis, Yars’ Revenge… but my favorite game was David Crane’s trailblazing classic Pitfall II: Lost Caverns.

That choice made sense, as I was on a fresh high from seeing Raiders of the Lost Ark. But Pitfall II was a significant and underappreciated milestone in the gaming industry. While the majority of Atari games were single-screen puzzles, Pitfall II presented a sprawling multi-screen adventure with dynamically changing music, save points, and a main character who could run, jump, climb, ride balloons to access new areas, and even swim (a talent notably lacking in many, many game heroes who followed). It was also an Activision game, and Activision was known for bright graphics and quality titles. The company also spearheaded third-party game development.

In short, it was an evolution: not just a collection of puzzles, but the creation of a fully interactive virtual world. Not just running through a maze, or hopping around a construction site commandeered by a giant gorilla, but a complete adventure spanning a topside jungle and a subterranean ocean (and I know other games offered this too, from Spike’s Peak to Adventure, but no one did it as well or as vividly as Lost Caverns). To this day, I still whistle its memorable theme song.

I hung onto the Atari’s aging hardware for a while, but that changed one afternoon when I visited a friend and feasted my eyes on the Nintendo Entertainment System. The game my friend showed me was Konami’s Castlevania.

Castlevania felt like someone had made a game just for me. Bright, diverse graphics and a stupendous soundtrack underscored a journey into a haunted castle crammed with monsters from mythology and classic early horror films. I became obsessed, and could regularly speed-run through the game with a single life. (My supreme achievement was getting through another Konami game, Contra, without dying while using the default gun. No, seriously.)

The NES got me through a very difficult time in my life. With parents on the ragged cusp of divorce, crippling financial problems that forced me into a new school, and a growing sense of personal isolation, I needed an active form of entertainment. I still devoured books (my favorite series was the marvelous Fighting Fantasy Gamebooks, which are also an active form of entertainment) and absorbed hundreds of movies, but video games let me be in charge of a temporary destiny. I rifled through Legendary Wings, Blaster Master, the Mega Man series, Mike Tyson’s Punch-Out!!, the Super Mario games, Life Force, Trojan, Zelda, Metroid… universe after universe.

For a short time in college, I took a break from gaming. Even so, I picked up an SNES for snowed-in, pizza-chomping evenings with friends, trading off the controller to work our way through Super Castlevania IV. But those were the years of poetry and music and girlfriends and the genesis of my lifelong circle of friends. I was in a transcendentalist phase seasoned with romanticism: Aldous Huxley, Thoreau, Emerson, Byron, Pope. Gaming had faded into background noise.

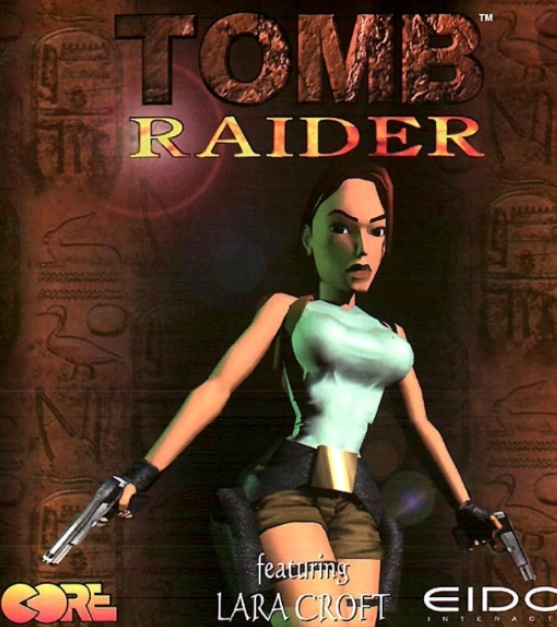

Then, in 1996 while visiting a friend’s dorm room, I saw the PlayStation for the first time. The game my friend was playing was Tomb Raider.

It was like Pitfall II for a then-modern world. Remember what I said about how Castlevania seemed tailor-made for me? Tomb Raider had a similar impact. Here was one of the pioneers of 3D third-person platforming, letting the player explore intricate underground environments with traps and treasure and surprises galore, courtesy of Lara Croft, gaming’s biggest star since Pac-Man.

The fifth and sixth generations of video game consoles saw a major advancement toward the idea of games as art. Metal Gear Solid delivered a story worthy of a spy novel with a smart cinematic sensibility. Deus Ex offered a cyberpunk noir as prescient as anything handed down from Gibson. Beyond Good and Evil showcased a world of Miyazaki-like whimsy, with cel-shaded animation bolstered by a distinctly French aesthetic. Jade Empire put BioWare on my radar, breaking bold new narrative ground.

And what to say about today? Games offer something unique in the world of art, as they directly engage the participant. The moral quandaries and choices in games like Mass Effect, the narrative complexity and quality in The Witcher 3, the top-flight performances and immersion of Red Dead Redemption 2… this is what games have been evolving toward. This is the potential I glimpsed way back in Pitfall II.

In the last couple of years, I played VR games for the first time since the clunky Dactyl Nightmare of the mid-90s (which featured a headset heavy enough to crush cartilage). VR is growing past its gimmicky stage, and is clearly the next avenue of game evolution.

And from there? Augmented Reality? Brain-Machine Interfaces?

Well, yes. And as I’ve occasionally written, I certainly don’t think all of it will be a positive thing.